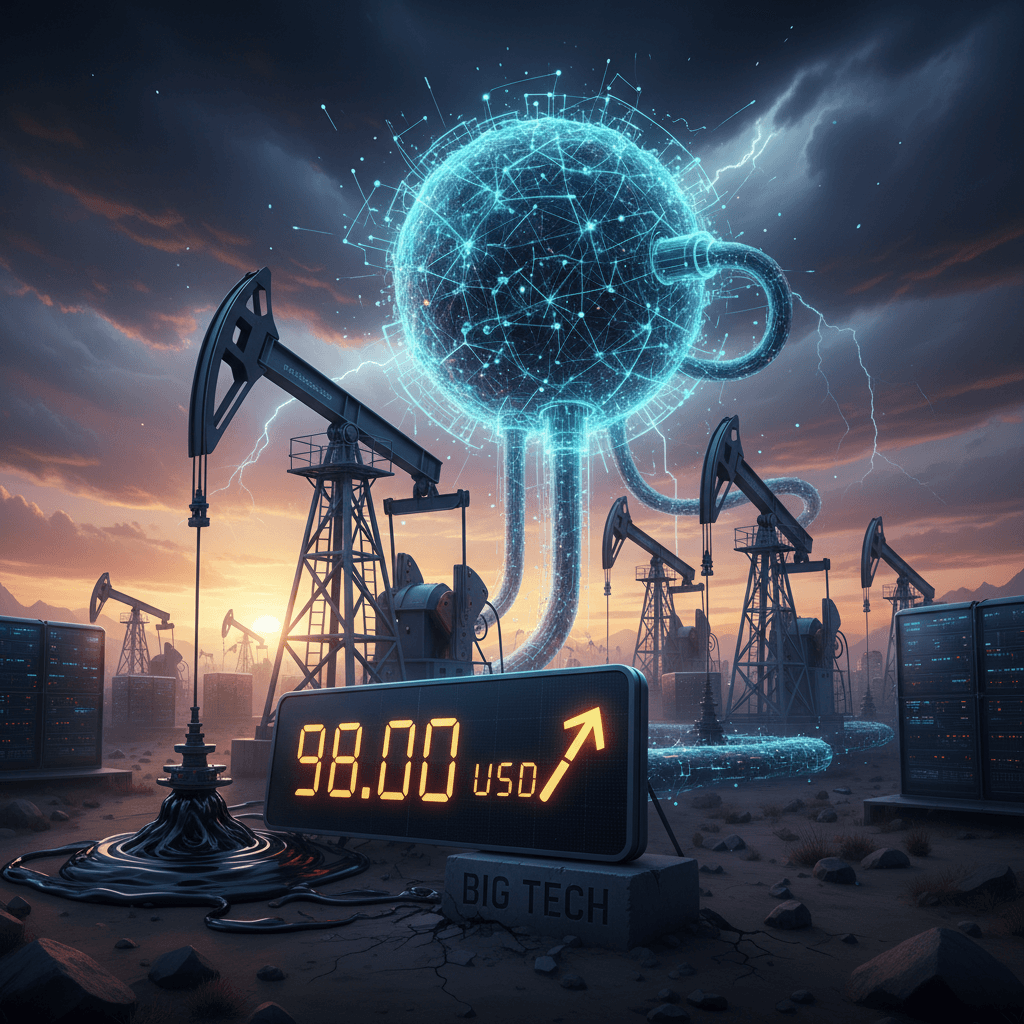

Everyone’s looking at Riyadh and Vienna for answers on why Brent crude is suddenly flirting with $98 a barrel. They’re wrong. The real story isn’t in an OPEC+ meeting; it’s humming away inside a new data center in rural Ohio, consuming the same amount of power as 80,000 homes just to train a single AI model.

For the past year, we’ve been drowning in breathless demos of generative AI. It’s been a magic show. We focused on the slick user interface, the seemingly infinite capabilities, and the multi-trillion-dollar valuations. We completely ignored the man behind the curtain—the one shoveling megawatts into the furnace like coal into a steam engine. That furnace is now setting the global price of oil.

This isn't a temporary supply shock. This is a permanent, structural demand shock delivered by an industry that, until now, has lived almost entirely in the ethereal world of software. The physical world is finally sending an invoice, and it's got a lot of zeros on it.

What Is Brent Oil and Why Does It Suddenly Matter to Tech?

Let’s get the basics out of the way. Brent Crude is one of the main global benchmarks for oil prices. It’s sourced from the North Sea and, as Wikipedia will tell you, its price influences a huge chunk of the world's oil contracts. For most of my career, its daily fluctuations were background noise—something for the finance guys, not the people writing code.

That's changed. Why? Because every line of Python code that calls a large language model, every API request to generate an image, every query you type into a chatbot—it all ends up as a CPU/GPU cycle somewhere. And that cycle requires electricity. Lots of it.

For decades, tech’s energy footprint grew linearly. But the AI arms race of 2024-2026 has bent that curve into a hockey stick. A recent report from the International Energy Agency (IEA) projects that electricity consumption from data centers, AI, and cryptocurrency combined could top 1,000 terawatt-hours in 2026, roughly equivalent to the entire electricity consumption of Japan. The scary part? That forecast has already been revised upwards twice.
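Terawatt-hours are hard to picture. A quick back-of-the-envelope conversion turns the 1,000 TWh figure into an average continuous power draw. It assumes the figure is a single year's consumption spread evenly over 8,760 hours, and the function name is just for illustration.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Convert an annual energy total in TWh into the average continuous draw in GW.

    Assumes the energy is consumed evenly across the whole year.
    """
    # 1 TWh = 1,000 GWh; dividing GWh by hours gives GW
    return annual_twh * 1_000 / HOURS_PER_YEAR


if __name__ == "__main__":
    gw = average_power_gw(1_000)
    print(f"1,000 TWh/year is about {gw:.0f} GW running around the clock")
```

Under that assumption it comes out to roughly 114 GW of round-the-clock demand, about what 114 one-gigawatt power plants would produce running flat out all year. Real demand isn't flat, so the peak draw would be higher than this average.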

When you create that much new, non-negotiable demand for energy, you put a floor under the price of every energy source. And because natural gas, a key fuel for electricity generation, is often priced against oil (many long-term LNG contracts are indexed directly to crude), a spike in Brent crude now flows directly to the utility bills for Amazon’s AWS, Microsoft’s Azure, and Google Cloud Platform. Which means it flows directly to your startup’s monthly burn rate.
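To make the oil-to-electricity link concrete, here is a rough sketch of how an oil-indexed gas price could feed into the fuel cost of gas-fired power. Everything in it is an assumption for illustration: the linear contract formula (gas price = slope × Brent + constant), the slope of 0.12 and constant of $0.50/MMBtu, and the 7.0 MMBtu/MWh heat rate for a combined-cycle plant. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OilIndexedGasContract:
    """Hypothetical oil-indexed gas contract: price = slope * Brent + constant."""

    slope: float     # share of the Brent price passed through (assumed)
    constant: float  # fixed adder in $/MMBtu (assumed)

    def gas_price(self, brent_usd_per_bbl: float) -> float:
        """Gas price in $/MMBtu for a given Brent price in $/bbl."""
        return self.slope * brent_usd_per_bbl + self.constant


def fuel_cost_per_mwh(gas_usd_per_mmbtu: float, heat_rate_mmbtu_per_mwh: float = 7.0) -> float:
    """Fuel component of generation cost in $/MWh for a gas-fired plant.

    The default heat rate of 7.0 MMBtu/MWh is an assumed, roughly combined-cycle value.
    """
    return gas_usd_per_mmbtu * heat_rate_mmbtu_per_mwh


if __name__ == "__main__":
    contract = OilIndexedGasContract(slope=0.12, constant=0.5)  # illustrative numbers only
    for brent in (75.0, 98.0):
        gas = contract.gas_price(brent)
        fuel = fuel_cost_per_mwh(gas)
        print(f"Brent ${brent:.0f}/bbl -> gas ${gas:.2f}/MMBtu -> fuel ${fuel:.2f}/MWh")
```

With these made-up parameters, moving Brent from $75 to $98 adds about $19 per MWh to the fuel component of gas-fired generation. How much of that actually reaches a cloud provider's bill depends on how much of its supply is gas-fired and how its power contracts are priced.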

The Official Narrative Is a Convenient Distraction

Flip on any financial news network and you'll hear the same tired explanations. They’ll point to renewed production cuts from OPEC+, a minor disruption in a Nigerian pipeline, or geopolitical posturing in the South China Sea. And sure, those things add a few dollars of "risk premium" to the price.

But that's the sideshow. The main event is the colossal, unplanned energy feast being consumed by Big Tech. In their Q4 2025 earnings calls, the leadership at both Microsoft and Amazon talked about "significant capital expenditures to build out our AI infrastructure." What they didn't put in the headline was that their energy costs jumped 40% year-over-year, far outpacing their customer growth. This is the critical detail everyone seems to be missing, and it's exactly the kind of hidden cost that is now spooking global funds.

“We are optimizing our data center architecture for a new era of computing,” a Google Cloud spokesperson told reporters last month. It’s the kind of polished PR-speak I’ve heard a thousand times at product launches. What it really means is: “We have no idea how to power all these GPUs, and it’s costing us a fortune.”

The numbers are staggering. Training a model like GPT-3 was estimated to have consumed around 1.3 gigawatt-hours. The next generation of models, rumored to be in training now, is expected to require 5-10x that amount. That’s not a software problem; it’s a physics problem. And physics doesn't care about your valuation.
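The scaling claim is simple multiplication, but putting it in household terms helps. This sketch multiplies the 1.3 GWh training estimate by the quoted 5-10x range and expresses each result in household-years of electricity. It assumes a round 10,500 kWh per US household per year, which is an assumption rather than a figure from the text, and the helper names are hypothetical.

```python
BASELINE_TRAINING_GWH = 1.3      # training-energy estimate quoted in the text
HOUSEHOLD_KWH_PER_YEAR = 10_500  # assumed round figure for one US household's annual use


def scaled_training_energy(baseline_gwh: float, multiplier: float) -> float:
    """Energy in GWh for a run that needs `multiplier` times the baseline."""
    return baseline_gwh * multiplier


def household_years(energy_gwh: float, kwh_per_household_year: float = HOUSEHOLD_KWH_PER_YEAR) -> float:
    """How many household-years of electricity an energy total represents."""
    # 1 GWh = 1,000,000 kWh
    return energy_gwh * 1_000_000 / kwh_per_household_year


if __name__ == "__main__":
    for multiplier in (1, 5, 10):
        gwh = scaled_training_energy(BASELINE_TRAINING_GWH, multiplier)
        print(f"{multiplier:>2}x baseline: {gwh:.1f} GWh, about {household_years(gwh):,.0f} household-years")
```

The 5-10x range works out to roughly 6.5 to 13 GWh per training run, or on the order of 600 to 1,200 household-years of electricity under that household assumption.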